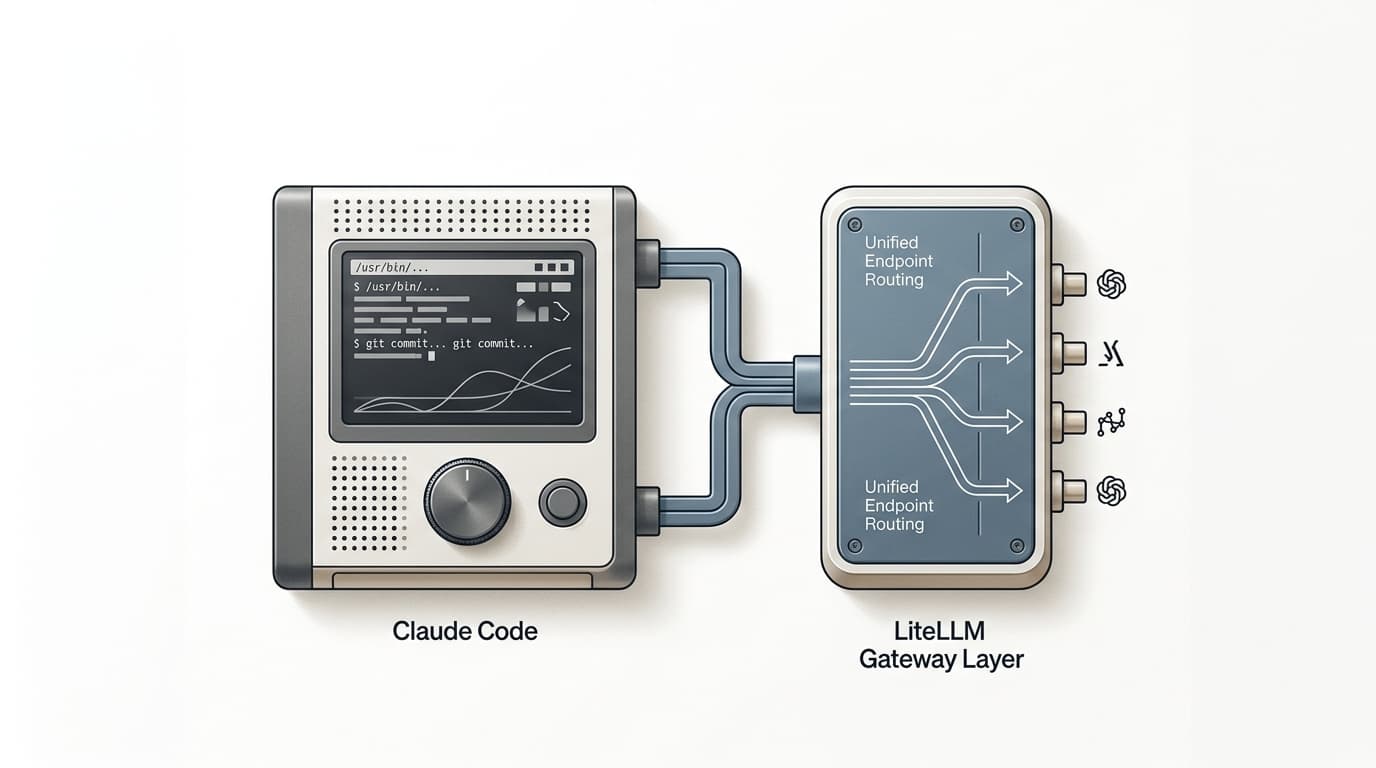

The Case for Multi-Agent Systems

Anthropic's multi-agent research system outperformed single-agent Claude Opus 4 by roughly 90% on the company's internal research evaluation. The reason isn't better models. It's that intelligence degrades when you ask one agent to do everything, and improves when you let specialists work in isolation.